The viability of news has been in rapid decline since the mid-2000s. This post presents a critical analysis of how news publishers themselves helped precipitate the crisis by enthusiastically adopting Big Tech platform technologies and audience-building strategies. I show how search and social media platforms disrupted publishers’ relationships with audiences and advertisers by appropriating control over news distribution and revenue. I use Anthony Giddens’ structuration theory and Bruno Latour’s actor-network theory to explore the restructuring of the news industry by the sociotechnical practices and surveillance economics of today’s dominating platforms. Leveraging Michel Serres’ discourse on social parasitism, I present a research framework for assessing symbiotic and parasitic relationships in sociotechnical systems using historical, quantitative, and qualitative methods, and to identify where news publishers still have agency to begin resolving the crisis. And I suggest the urgency of this research framework as publishers rapidly adopt new AI technologies.

Decolonial AI: Decolonial Theory as Sociotechnical Foresight in Artificial Intelligence – Annotation & Notes

This paper looks at advances in artificial intelligence through the lens of critical science, post-colonial, and decolonial theory. The authors acknowledge the positive potential for AI technologies, but use this paper to highlight its considerable risks, especially for vulnerable populations. They call for a proactive approach to be adopted by AI communities using decolonial theories that use historical hindsight to identify patterns of power that remain relevant – perhaps more than ever – in the rapid advance of AI technologies.

Fueling the AdTech Machine: Google Analytics and the Commodification of Personal Data

This paper concerns the role of online analytics in facilitating the rise of today's ubiquitous programmatic advertising, referred to herein as "AdTech." Most criticism of AdTech has focused on online tracking which captures user data, and digital advertising which exploits it for commercial purposes. Almost entirely lost in the discussion is the role of analytics platforms, which process personal data and make it actionable for targeted advertising. I argue that the role of analytics is key to the rise of AdTech, and has not been given the critical attention it deserves. I wrote this paper while pursuing my research as a PhD student at the University of Illinois School of Information Sciences. It has not been peer-reviewed or published elsewhere, and I’m posting it here to invite comments, criticism, and suggestions. Please feel free to send me email at jackb at illinois dot edu, or twitter message me @ jackbrighton.

Critical data modeling and the basic representation model – Annotation & Notes

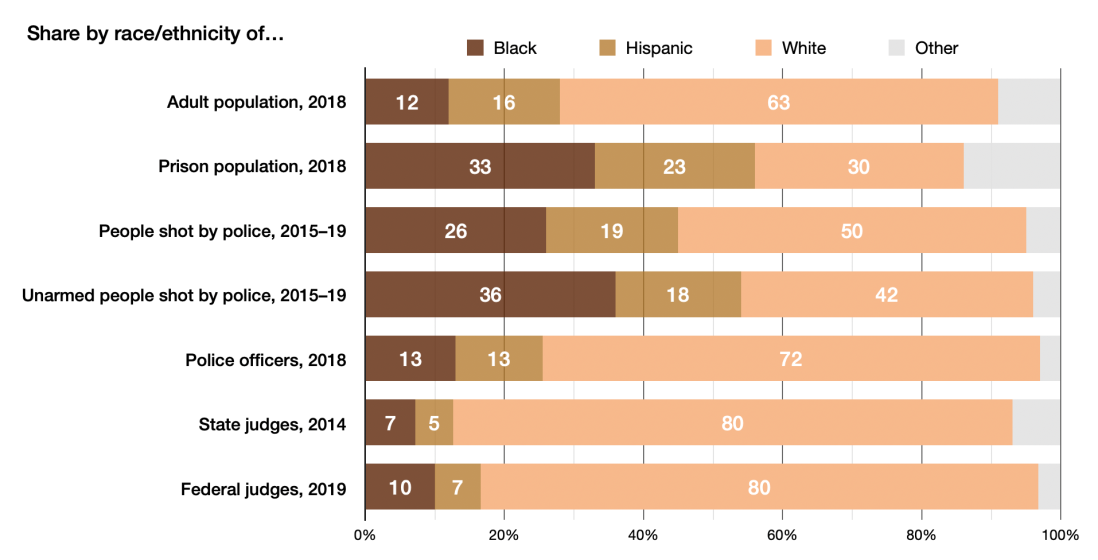

Data models are foundational to information processing, and in the digital world they stand in for the real world. When machines are used to make algorithmically-informed decisions, their algorithms are informed by the data models they use. And the data structured by data models is numerical of necessity, since machines must perform logical operations, and not creative interpretations. It follows that data used in machine operations are machine-language translations of real-world phenomena, expressed in a data model designed for efficient processing. It should not be surprising then that as information systems increasingly make decisions that affect people and communities, their operations are in a very direct sense an extension of the messy human world. This has resulted in information systems that reflect human racism, sexism, and many otherisms, with real-world harm to individuals and communities. But given the black-box nature of “machine learning” algorithms, how do we know what happens inside the black box? How can we document machine bias so as to design algorithms that don’t perpetuate social harms?

The “Privacy Paradox” and Our Expectations of Online Privacy

The analysis presented here is based on my review of existing research on privacy expectations of people who create online content. This analysis concerns the full range of user interactions on what we used to call Web 2.0 platforms, focusing on social media systems like Facebook, Twitter, Reddit, Instagram, and Amazon. User interactions include posting original content (text, photos, videos, memes, etc.), and commenting on content posted by others. Reviews on Amazon and comments on news websites count as online content in this analysis. Photos uploaded to photo-sharing sites and original videos posted to YouTube also count. Anything in any format created by an individual from their own original thought and creative energy, and subsequently posted by the individual on social media platforms, counts as online content. In most instances the online content or interaction contains or is traceable to personally identifiable information, even if this is unintended by the content creator.

Information and Communication Technology and Society – Annotation & Notes

In this article Fuchs introduces “Critical Internet Theory” as a foundation for analyzing the Internet and society based on a Marxian critique. He illustrates Critical Internet Theory (hereinafter CIT for brevity) using the emergence of the so-called Web 2.0 as an Internet gift commodity strategy, wherein users produce content on free platforms, which commodify the content to increase their advertising revenues. Fuchs introduces the concept of the “Internet prosumer commodity” to describe this “free” exchange of labor and value. This strategy, he writes, “functions as a legitimizing ideology.”

The Panoptic Sort: A Political Economy of Personal Information, by Oscar H. Gandy, Jr. – Book Review

The academic field of surveillance studies has (thankfully in my view) become more crowded during the past few years in response to the increasing use of data technologies for social control. In the early 1990s, when some of us (e.g. me) were naively celebrating the liberating potential of the internet, Oscar H. Gandy, Jr. was critically examining earlier incarnations of data systems and practices that contributed to the entrenchment of existing systems of domination and social injustice. First published in 1993, his book The Panoptic Sort was a groundbreaking account of the history and rationalization of surveillance in service of institutional control and corporate profit at the expense of individual privacy and autonomy. In the a second edition, published by Oxford University press in 2021, Gandy updates his original book for the context of today’s increasingly ubiquitous technologies that collect, process, and commodify personal information for instrumental use by corporate interests.

The Application of Artificial Intelligence to Journalism: An Analysis of Academic Production – Annotation & Notes

This paper presents a summary of academic research on AI use in journalism, based on the authors’ review of 358 texts published between 2010 and January 2021. The materials they reviewed were found through academic databases including Scopus and Web of Science, in addition to Google Scholar. Most of the articles were published in English, and the majority was from the United States. Given significant developments with AI, and AI in journalism, since 2021, this paper is really a snapshot of research published for the period covered. The authors do note a rapid increase in research until 2019, with a dropoff in 2020 presumably from disruptions of the COVID-19 pandemic.

Dealing with Digital Intermediaries: A Case Study of the Relations between Publishers and Platforms – Annotation & Notes

In this article, published in 2017 in the journal New Media & Society, Nielsen and Ganter report on a series of interviews with editors, senior management, and product developers at a large, well-established European news media organization regarding their experiences and perspective on relationships with the main digital platforms that are now central to news distribution, namely Facebook and Google. This paper documents the asymmetrical power relationship between a large, well-known and successful news organization, and the digital platforms on which it now depends for audience reach. And it points to a gap in similar research on smaller, more precarious news organizations.

Period, Theme Event: Locating Information History in History

When we focus primarily on innovations in information technology, we risk flirting with technological determinism while forgetting about the social context. The question for information historians is not “how did this information technology come about,” but “how can we explore history by examining social practices around information and its infrastructures.” But this question is so general it doesn’t provide much of an entry point for actual research. To address the problem of “where do we start,” Alistair Black and Bonnie Mak (2020) propose three specific lenses as an organizing paradigm: Period, Theme, and Event.