I posted this short piece on Medium in 2015, where you can still find it. I'm republishing it here because, somewhat ironically given its topic of preservation, I'm less than fully confident that Medium will still be around in a few years, at least in its current "open" form. My exposure to archival practice came from my struggles as a media producer in the emerging digital age. I began designing websites with streaming and downloadable multimedia in 1997, and quickly realized that without an archival plan the situation was becoming hopeless. I saw how quickly technology was changing, and suspected that the media we published on the web at that time would be unplayable within a few years. And the challenge of preserving the audiovisual record has only grown larger since I wrote this in 2015.

Tag: Metadata

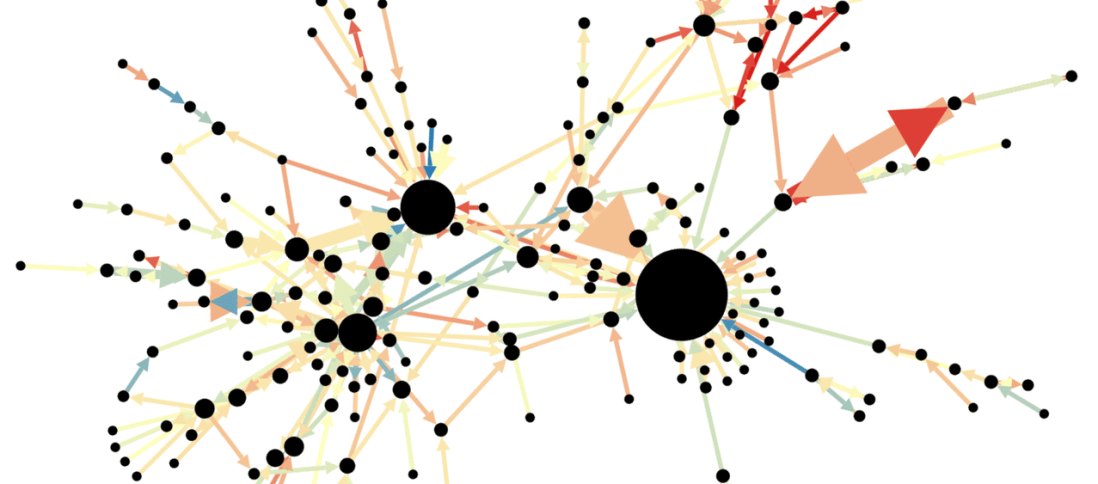

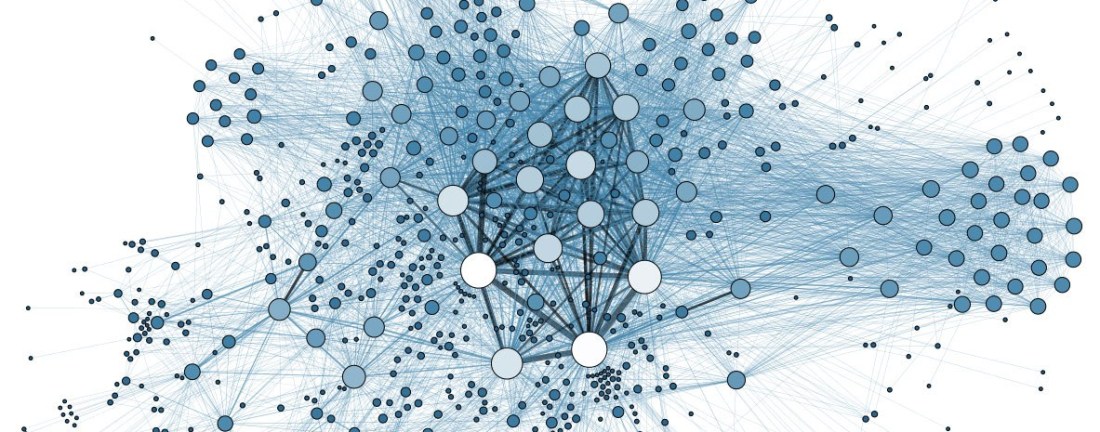

Annotation: Mining the Social Web

While mining the Information Science Virtual Library for academic papers on "social media" and "data mining," I came across Matthew Russell's O'Reilly book Mining the Social Web: Data Mining Facebook, Twitter, LinkedIn, Google+, GitHub, and More. The 2nd edition was published in October 2013, with a 3rd edition scheduled for publication next month. Because the book covers the specific techniques I'm after for data mining and analysis of social media, I decided to pull the trigger and buy the book right now.

The book is basically a tutorial on data mining social media sites using Python. Alas, all the source code it references is in Python 2.7 and I've been working with version 3.6, but that's fine. It also covers using IPython Notebooks and even begins with a guide to setting things up on a virtual server. I'll probably wait to actually do that until I see what's new in the 3rd edition. But the book definitely makes the final cut for my annotated bibliography. With that as a given, I thought it would be useful to get started with the first annotation.

Social Media Data Collection, Processing, and Use in Research, Marketing, and Political Communication

I've been spending time learning Python since January, and it's creating new problems. For example, suddenly I want to do things with Python. I want to write a program to process titles and filenames of media archive records from an Excel spreadsheet, then find the matching media files, which are stored on a network drive. I need to read a few thousand PBCore XML records and convert them to JSON. I want to take the JSON output from Google Speech-to-Text transcripts and convert it to WebVTT files. But I can take on just one project right now, and here it is.